Running and Explaining the deepstream-test2 Sample in DeepStream 4.0

Tags: NVIDIA, object detection, JETSON-NANO, deepstream, object tracking, GPU

Annotated deepstream-test2 program

1. First, run the program:

Copy sample_720p.h264 into the deepstream-test2 directory:

cd deepstream-test2/

make

./deepstream-test2-app sample_720p.h264

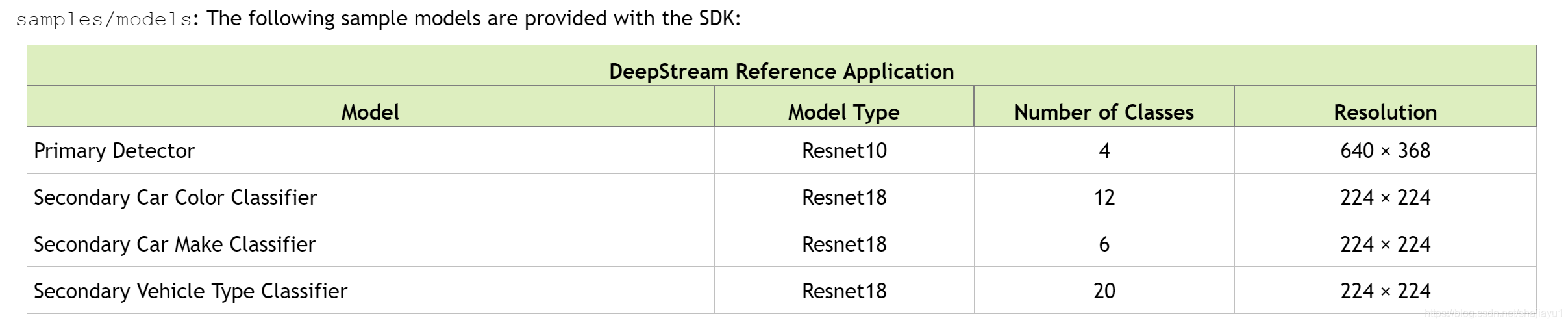

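Compared with deepstream-test1, deepstream-test2 adds object tracking and classification, so it runs more slowly. Five models process the video: the primary detector, the tracker (provided as a library), and three secondary classifiers, as shown in the figure below:

The output looks like this: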

2. Understanding the code requires some GStreamer knowledge and a basic grasp of C.

The code is as follows:

#include <gst/gst.h>

#include <glib.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <limits.h>

#include "gstnvdsmeta.h"

#define PGIE_CONFIG_FILE "dstest2_pgie_config.txt"

#define SGIE1_CONFIG_FILE "dstest2_sgie1_config.txt"

#define SGIE2_CONFIG_FILE "dstest2_sgie2_config.txt"

#define SGIE3_CONFIG_FILE "dstest2_sgie3_config.txt"

#define MAX_DISPLAY_LEN 64

#define TRACKER_CONFIG_FILE "dstest2_tracker_config.txt"

#define MAX_TRACKING_ID_LEN 16

#define PGIE_CLASS_ID_VEHICLE 0

#define PGIE_CLASS_ID_PERSON 2

/* The muxer output resolution must be set if the input streams will be of

* different resolution. The muxer will scale all the input frames to this

* resolution. */

#define MUXER_OUTPUT_WIDTH 1920

#define MUXER_OUTPUT_HEIGHT 1080

/* Muxer batch formation timeout in microseconds, e.g. 40000 for 40 millisec.

* Should ideally be set based on the fastest source's framerate. Note that the

* value used here, 4000000 usec, is 4 seconds. */

#define MUXER_BATCH_TIMEOUT_USEC 4000000

gint frame_number = 0;

/* These are the strings of the labels for the respective models */

gchar sgie1_classes_str[12][32] = {

"black", "blue", "brown", "gold", "green",

"grey", "maroon", "orange", "red", "silver", "white", "yellow"

};

gchar sgie2_classes_str[20][32] =

{

"Acura", "Audi", "BMW", "Chevrolet", "Chrysler",

"Dodge", "Ford", "GMC", "Honda", "Hyundai", "Infiniti", "Jeep", "Kia",

"Lexus", "Mazda", "Mercedes", "Nissan",

"Subaru", "Toyota", "Volkswagen"

};

gchar sgie3_classes_str[6][32] = {

"coupe", "largevehicle", "sedan", "suv",

"truck", "van"

};

gchar pgie_classes_str[4][32] =

{

"Vehicle", "TwoWheeler", "Person", "RoadSign" };

/* gie_unique_id is one of the properties in the above dstest2_sgiex_config.txt

* files. These should be unique and known when we want to parse the Metadata

* respective to the sgie labels. Ideally these should be read from the config

* files but for brevity we ensure they are same. */

guint sgie1_unique_id = 2;

guint sgie2_unique_id = 3;

guint sgie3_unique_id = 4;
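The listing declares label tables for the detector and the three classifiers, but the probe function shown in this post never reads them. As a minimal sketch of how a class index could be turned into a label, the helper below does a bounds-checked lookup into the color table. The names `sgie1_labels` and `sgie1_label_for` are hypothetical and are not part of the sample; how the class index is obtained from the classifier metadata is not shown here.

```c
#include <stddef.h>
#include <stdio.h>

/* Label table copied from the listing (vehicle colors, SGIE1). */
static const char sgie1_labels[12][32] = {
  "black", "blue", "brown", "gold", "green",
  "grey", "maroon", "orange", "red", "silver", "white", "yellow"
};

/* Hypothetical helper (not part of the sample): map a class index to its
 * label and return "unknown" for indices outside the table. */
static const char *
sgie1_label_for (int class_index)
{
  size_t count = sizeof (sgie1_labels) / sizeof (sgie1_labels[0]);

  if (class_index < 0 || (size_t) class_index >= count)
    return "unknown";
  return sgie1_labels[class_index];
}
```

The bounds check matters because an index taken from metadata is external input; indexing the array directly with an unexpected value would read past the table.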

/* This is the buffer probe function that we have registered on the sink pad

* of the OSD element. All the infer elements in the pipeline shall attach

* their metadata to the GstBuffer, here we will iterate & process the metadata

* forex: class ids to strings, counting of class_id objects etc. */

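// This is the buffer probe function registered on the sink pad of the OSD element.

// Every inference element in the pipeline attaches its metadata to the GstBuffer.

// Here we iterate over that metadata, count the objects, and set up the on-screen text.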

static GstPadProbeReturn

osd_sink_pad_buffer_probe (GstPad * pad, GstPadProbeInfo * info,

gpointer u_data)

{

GstBuffer *buf = (GstBuffer *) info->data;

guint num_rects = 0;

NvDsObjectMeta *obj_meta = NULL;

guint vehicle_count = 0;

guint person_count = 0;

NvDsMetaList * l_frame = NULL;

NvDsMetaList * l_obj = NULL;

NvDsDisplayMeta *display_meta = NULL;

NvDsBatchMeta *batch_meta = gst_buffer_get_nvds_batch_meta (buf);

for (l_frame = batch_meta->frame_meta_list; l_frame != NULL;

l_frame = l_frame->next) {

NvDsFrameMeta *frame_meta = (NvDsFrameMeta *) (l_frame->data);

int offset = 0;

for (l_obj = frame_meta->obj_meta_list; l_obj != NULL;

l_obj = l_obj->next) {

obj_meta = (NvDsObjectMeta *) (l_obj->data);

if (obj_meta->class_id == PGIE_CLASS_ID_VEHICLE) {

vehicle_count++;

num_rects++;// count vehicles

}

if (obj_meta->class_id == PGIE_CLASS_ID_PERSON) {

person_count++;

num_rects++;// count persons

}

}

display_meta = nvds_acquire_display_meta_from_pool(batch_meta);// display metadata that holds the on-screen text

NvOSD_TextParams *txt_params = &display_meta->text_params[0];

display_meta->num_labels = 1;

txt_params->display_text = g_malloc0 (MAX_DISPLAY_LEN);

offset = snprintf(txt_params->display_text, MAX_DISPLAY_LEN, "Person = %d ", person_count);

// pass only the space that remains after the first write

offset = snprintf(txt_params->display_text + offset , MAX_DISPLAY_LEN - offset, "Vehicle = %d ", vehicle_count);

/* Now set the offsets where the string should appear */

txt_params->x_offset = 10;

txt_params->y_offset = 12;

/* Font, font color and font size */

txt_params->font_params.font_name = "Serif";

txt_params->font_params.font_size = 10;

txt_params->font_params.font_color.red = 1.0;

txt_params->font_params.font_color.green = 1.0;

txt_params->font_params.font_color.blue = 1.0;

txt_params->font_params.font_color.alpha = 1.0;

/* Text background color */

txt_params->set_bg_clr = 1;// enable the text background color

txt_params->text_bg_clr.red = 0.0;

txt_params->text_bg_clr.green = 0.0;

txt_params->text_bg_clr.blue = 0.0;

txt_params->text_bg_clr.alpha = 1.0;

nvds_add_display_meta_to_frame(frame_meta, display_meta);// attach the display metadata (the text) to this frame

}

g_print ("Frame Number = %d Number of objects = %d "

"Vehicle Count = %d Person Count = %d\n",

frame_number, num_rects, vehicle_count, person_count);

frame_number++;

return GST_PAD_PROBE_OK;

}
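The probe builds its overlay string with two `snprintf` calls, the second one writing at an offset into the same buffer. When writing at an offset, the size argument has to be the space that remains, not the full buffer size. A minimal sketch of a helper that keeps this bookkeeping in one place follows; `append_text` is a hypothetical name, not something the sample or DeepStream provides.

```c
#include <stdarg.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper: append formatted text at `offset` inside a buffer of
 * `size` bytes and return the new offset. Output is truncated rather than
 * overflowed; after truncation the returned offset is size - 1. */
static size_t
append_text (char *buf, size_t size, size_t offset, const char *fmt, ...)
{
  va_list args;
  int written;

  if (size == 0 || offset >= size - 1)
    return offset;              /* no room left */

  va_start (args, fmt);
  written = vsnprintf (buf + offset, size - offset, fmt, args);
  va_end (args);

  if (written < 0)
    return offset;              /* formatting error: offset unchanged */
  if ((size_t) written >= size - offset)
    return size - 1;            /* truncated: buffer is now full */
  return offset + (size_t) written;
}
```

With this helper the two writes become `off = append_text (buf, size, off, "Person = %d ", person_count);` followed by the same call for the vehicle count, and the running offset never points past the terminating byte.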

static gboolean// handle each bus message according to its type

bus_call (GstBus * bus, GstMessage * msg, gpointer data)

{

GMainLoop *loop = (GMainLoop *) data;

switch (GST_MESSAGE_TYPE (msg)) {

case GST_MESSAGE_EOS:// end of stream reached

g_print ("End of stream\n");

g_main_loop_quit (loop);

break;

case GST_MESSAGE_ERROR:{

// error handling

gchar *debug;

GError *error;

gst_message_parse_error (msg, &error, &debug);

g_printerr ("ERROR from element %s: %s\n",

GST_OBJECT_NAME (msg->src), error->message);

if (debug)

g_printerr ("Error details: %s\n", debug);

g_free (debug);

g_error_free (error);

g_main_loop_quit (loop);

break;

}

default:

break;

}

return TRUE;

}

/* Tracker config parsing */

#define CHECK_ERROR(error) \

if (error) { \

g_printerr ("Error while parsing config file: %s\n", error->message); \

goto done; \

}

#define CONFIG_GROUP_TRACKER "tracker"

#define CONFIG_GROUP_TRACKER_WIDTH "tracker-width"

#define CONFIG_GROUP_TRACKER_HEIGHT "tracker-height"

#define CONFIG_GROUP_TRACKER_LL_CONFIG_FILE "ll-config-file"

#define CONFIG_GROUP_TRACKER_LL_LIB_FILE "ll-lib-file"

#define CONFIG_GROUP_TRACKER_ENABLE_BATCH_PROCESS "enable-batch-process"

#define CONFIG_GPU_ID "gpu-id"

static gchar *

get_absolute_file_path (gchar *cfg_file_path, gchar *file_path)

{

gchar abs_cfg_path[PATH_MAX + 1];

gchar *abs_file_path;

gchar *delim;

if (file_path && file_path[0] == '/') {

return file_path;

}

if (!realpath (cfg_file_path, abs_cfg_path)) {

g_free (file_path);

return NULL;

}

// Return absolute path of config file if file_path is NULL.

if (!file_path) {

abs_file_path = g_strdup (abs_cfg_path);

return abs_file_path;

}

delim = g_strrstr (abs_cfg_path, "/");

*(delim + 1) = '\0';

abs_file_path = g_strconcat (abs_cfg_path, file_path, NULL);

g_free (file_path);

return abs_file_path;

}
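`get_absolute_file_path` returns an absolute `file_path` unchanged; otherwise it resolves the config file with `realpath`, cuts the result after the last `/`, and appends `file_path`, so relative entries are interpreted relative to the config file's directory. The sketch below shows the same joining rule on plain strings. It is a simplified stand-in under stated assumptions: `resolve_relative_to_config` is a hypothetical name, it writes into a caller-supplied buffer instead of allocating, and it does not call `realpath`, so it does not canonicalize the config path.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: write into `out` the path of `file_path` resolved
 * against the directory part of `cfg_path`. Returns 0 on success and -1 if
 * the result does not fit into `out_size` bytes. */
static int
resolve_relative_to_config (const char *cfg_path, const char *file_path,
    char *out, size_t out_size)
{
  const char *slash;
  size_t dir_len;
  int n;

  if (file_path[0] == '/') {
    /* Already absolute: copy it through unchanged. */
    n = snprintf (out, out_size, "%s", file_path);
  } else {
    /* Keep everything up to and including the last '/' of the config path. */
    slash = strrchr (cfg_path, '/');
    dir_len = slash ? (size_t) (slash - cfg_path) + 1 : 0;
    n = snprintf (out, out_size, "%.*s%s", (int) dir_len, cfg_path, file_path);
  }
  return (n < 0 || (size_t) n >= out_size) ? -1 : 0;
}
```

For example, a config at `/opt/app/dstest2_tracker_config.txt` with the entry `tracker_config.yml` resolves to `/opt/app/tracker_config.yml`. The paths in this example are illustrative only.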

static gboolean

set_tracker_properties (GstElement *nvtracker)

{

gboolean ret = FALSE;

GError *error = NULL;

gchar **keys = NULL;

gchar **key = NULL;

GKeyFile *key_file = g_key_file_new ();

if (!g_key_file_load_from_file (key_file, TRACKER_CONFIG_FILE, G_KEY_FILE_NONE,

&error)) {

g_printerr ("Failed to load config file: %s\n", error->message);

g_error_free (error);

g_key_file_free (key_file);

return FALSE;

}// load the tracker config file

keys = g_key_file_get_keys (key_file, CONFIG_GROUP_TRACKER, NULL, &error);

// Returns the keys of the [tracker] group:

//tracker-width

//tracker-height

//gpu-id

//ll-lib-file

//ll-config-file

//enable-batch-process

CHECK_ERROR (error);

// Apply each setting to nvtracker

for (key = keys; *key; key++) {

if (!g_strcmp0 (*key, CONFIG_GROUP_TRACKER_WIDTH)) {

gint width =

g_key_file_get_integer (key_file, CONFIG_GROUP_TRACKER,

CONFIG_GROUP_TRACKER_WIDTH, &error);

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "tracker-width", width, NULL);

} else if (!g_strcmp0 (*key, CONFIG_GROUP_TRACKER_HEIGHT)) {

gint height =

g_key_file_get_integer (key_file, CONFIG_GROUP_TRACKER,

CONFIG_GROUP_TRACKER_HEIGHT, &error);

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "tracker-height", height, NULL);

} else if (!g_strcmp0 (*key, CONFIG_GPU_ID)) {

guint gpu_id =

g_key_file_get_integer (key_file, CONFIG_GROUP_TRACKER,

CONFIG_GPU_ID, &error);

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "gpu_id", gpu_id, NULL);

} else if (!g_strcmp0 (*key, CONFIG_GROUP_TRACKER_LL_CONFIG_FILE)) {

char* ll_config_file = get_absolute_file_path (TRACKER_CONFIG_FILE,

g_key_file_get_string (key_file,

CONFIG_GROUP_TRACKER,

CONFIG_GROUP_TRACKER_LL_CONFIG_FILE, &error));

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "ll-config-file", ll_config_file, NULL);

} else if (!g_strcmp0 (*key, CONFIG_GROUP_TRACKER_LL_LIB_FILE)) {

char* ll_lib_file = get_absolute_file_path (TRACKER_CONFIG_FILE,

g_key_file_get_string (key_file,

CONFIG_GROUP_TRACKER,

CONFIG_GROUP_TRACKER_LL_LIB_FILE, &error));

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "ll-lib-file", ll_lib_file, NULL);

} else if (!g_strcmp0 (*key, CONFIG_GROUP_TRACKER_ENABLE_BATCH_PROCESS)) {

gboolean enable_batch_process =

g_key_file_get_integer (key_file, CONFIG_GROUP_TRACKER,

CONFIG_GROUP_TRACKER_ENABLE_BATCH_PROCESS, &error);

CHECK_ERROR (error);

g_object_set (G_OBJECT (nvtracker), "enable_batch_process",

enable_batch_process, NULL);

} else {

g_printerr ("Unknown key '%s' for group [%s]", *key,

CONFIG_GROUP_TRACKER);

}

}

ret = TRUE;

done:

if (error) {

g_error_free (error);

}

if (keys) {

g_strfreev (keys);

}

g_key_file_free (key_file);

if (!ret) {

g_printerr ("%s failed", __func__);

}

return ret;

}

int

main (int argc, char *argv[])

{

GMainLoop *loop = NULL;

GstElement *pipeline = NULL, *source = NULL, *h264parser = NULL,

*decoder = NULL, *streammux = NULL, *sink = NULL, *pgie = NULL, *nvvidconv = NULL,

*nvosd = NULL, *sgie1 = NULL, *sgie2 = NULL, *sgie3 = NULL, *nvtracker = NULL;

g_print ("With tracker\n");

#ifdef PLATFORM_TEGRA

GstElement *transform = NULL;

#endif

GstBus *bus = NULL;

guint bus_watch_id = 0;

GstPad *osd_sink_pad = NULL;

/* Check input arguments */

if (argc != 2) {

g_printerr ("Usage: %s <elementary H264 filename>\n", argv[0]);

return -1;

}

/* Standard GStreamer initialization */

gst_init (&argc, &argv);

loop = g_main_loop_new (NULL, FALSE);

/* Create gstreamer elements */

/* Create Pipeline element that will be a container of other elements */

pipeline = gst_pipeline_new ("dstest2-pipeline");

// gst_pipeline_new creates the pipeline; its argument is the pipeline's name.

/* Source element for reading from the file */

source = gst_element_factory_make ("filesrc", "file-source");

// Creates an element of type filesrc named file-source.

// This element reads the input file.

/* Since the data format in the input file is elementary h264 stream,

* we need a h264parser */

h264parser = gst_element_factory_make ("h264parse", "h264-parser");

// Creates an element of type h264parse named h264-parser.

// This element parses the H.264 stream.

/* Use nvdec_h264 for hardware accelerated decode on GPU */

decoder = gst_element_factory_make ("nvv4l2decoder", "nvv4l2-decoder");

// This element decodes the H.264 stream with GPU hardware acceleration.

/* Create nvstreammux instance to form batches from one or more sources. */

streammux = gst_element_factory_make ("nvstreammux", "stream-muxer");

// This element batches frames from the input sources according to its properties.

if (!pipeline || !streammux) {

g_printerr ("One element could not be created. Exiting.\n");

return -1;

}

/* Use nvinfer to run inferencing on decoder's output,

* behaviour of inferencing is set through config file */

pgie = gst_element_factory_make ("nvinfer", "primary-nvinference-engine");

// Primary detector: runs inference on the input frames as specified by its config file.

/* We need to have a tracker to track the identified objects */

nvtracker = gst_element_factory_make ("nvtracker", "tracker");

// Tracker: tracks the detected objects.

/* We need three secondary gies so lets create 3 more instances of

nvinfer */

sgie1 = gst_element_factory_make ("nvinfer", "secondary1-nvinference-engine");

// Classifier: vehicle color

sgie2 = gst_element_factory_make ("nvinfer", "secondary2-nvinference-engine");

// Classifier: vehicle make

sgie3 = gst_element_factory_make ("nvinfer", "secondary3-nvinference-engine");

// Classifier: vehicle type

/* Use convertor to convert from NV12 to RGBA as required by nvosd */

nvvidconv = gst_element_factory_make ("nvvideoconvert", "nvvideo-converter");

// Video color format conversion

/* Create OSD to draw on the converted RGBA buffer */

nvosd = gst_element_factory_make ("nvdsosd", "nv-onscreendisplay");

// Works on the RGBA buffer: draws ROIs, bounding boxes of detected objects, and their text labels (font, color, label box).

/* Finally render the osd output */

#ifdef PLATFORM_TEGRA

transform = gst_element_factory_make ("nvegltransform", "nvegl-transform");

// Converts to an EGLImage instance for use by nveglglessink

#endif

sink = gst_element_factory_make ("nveglglessink", "nvvideo-renderer");

if (!source || !h264parser || !decoder || !pgie ||

!nvtracker || !sgie1 || !sgie2 || !sgie3 || !nvvidconv || !nvosd || !sink) {

g_printerr ("One element could not be created. Exiting.\n");

return -1;

}

#ifdef PLATFORM_TEGRA

if(!transform) {

g_printerr ("One tegra element could not be created. Exiting.\n");

return -1;

}

#endif

/* Set the input filename to the source element */

g_object_set (G_OBJECT (source), "location", argv[1], NULL);

// Set the source location; the source is the video file

g_object_set (G_OBJECT (streammux), "width", MUXER_OUTPUT_WIDTH, "height",

MUXER_OUTPUT_HEIGHT, "batch-size", 1,

"batched-push-timeout", MUXER_BATCH_TIMEOUT_USEC, NULL);

// Set the muxer output properties such as resolution, batch size and timeout

/* Set all the necessary properties of the nvinfer element,

* the necessary ones are : */

g_object_set (G_OBJECT (pgie), "config-file-path", PGIE_CONFIG_FILE, NULL);// config file for object detection

g_object_set (G_OBJECT (sgie1), "config-file-path", SGIE1_CONFIG_FILE, NULL);// config file for color classification

g_object_set (G_OBJECT (sgie2), "config-file-path", SGIE2_CONFIG_FILE, NULL);// config file for make classification

g_object_set (G_OBJECT (sgie3), "config-file-path", SGIE3_CONFIG_FILE, NULL);// config file for vehicle type classification

/* Set necessary properties of the tracker element. */

// Configure the object tracker

if (!set_tracker_properties(nvtracker)) {

g_printerr ("Failed to set tracker properties. Exiting.\n");

return -1;

}

/* we add a message handler */

bus = gst_pipeline_get_bus (GST_PIPELINE (pipeline));

bus_watch_id = gst_bus_add_watch (bus, bus_call, loop);// register the bus message handler

gst_object_unref (bus);

/* Set up the pipeline */

/* we add all elements into the pipeline */

/* decoder | pgie1 | nvtracker | sgie1 | sgie2 | sgie3 | etc.. */

#ifdef PLATFORM_TEGRA

gst_bin_add_many (GST_BIN (pipeline),

source, h264parser, decoder, streammux, pgie, nvtracker, sgie1, sgie2, sgie3,

nvvidconv, nvosd, transform, sink, NULL);// add the elements to the pipeline

#else

gst_bin_add_many (GST_BIN (pipeline),

source, h264parser, decoder, streammux, pgie, nvtracker, sgie1, sgie2, sgie3,

nvvidconv, nvosd, sink, NULL);

#endif

GstPad *sinkpad, *srcpad;

gchar pad_name_sink[16] = "sink_0";

gchar pad_name_src[16] = "src";

sinkpad = gst_element_get_request_pad (streammux, pad_name_sink);

if (!sinkpad) {

g_printerr ("Streammux request sink pad failed. Exiting.\n");

return -1;

}// request the named sink pad from the streammux element

srcpad = gst_element_get_static_pad (decoder, pad_name_src);

if (!srcpad) {

g_printerr ("Decoder request src pad failed. Exiting.\n");

return -1;

}

if (gst_pad_link (srcpad, sinkpad) != GST_PAD_LINK_OK) {

g_printerr ("Failed to link decoder to stream muxer. Exiting.\n");

return -1;

}

gst_object_unref (sinkpad);

gst_object_unref (srcpad);

/* Link the elements together */

if (!gst_element_link_many (source, h264parser, decoder, NULL)) {

g_printerr ("Elements could not be linked: 1. Exiting.\n");

return -1;

}

#ifdef PLATFORM_TEGRA

if (!gst_element_link_many (streammux, pgie, nvtracker, sgie1,

sgie2, sgie3, nvvidconv, nvosd, transform, sink, NULL)) {

g_printerr ("Elements could not be linked. Exiting.\n");

return -1;

}

#else

if (!gst_element_link_many (streammux, pgie, nvtracker, sgie1,

sgie2, sgie3, nvvidconv, nvosd, sink, NULL)) {

g_printerr ("Elements could not be linked. Exiting.\n");

return -1;

}

#endif

/* Lets add probe to get informed of the meta data generated, we add probe to

* the sink pad of the osd element, since by that time, the buffer would have

* had got all the metadata. */

osd_sink_pad = gst_element_get_static_pad (nvosd, "sink");

if (!osd_sink_pad)

g_print ("Unable to get sink pad\n");

else

gst_pad_add_probe (osd_sink_pad, GST_PAD_PROBE_TYPE_BUFFER,

osd_sink_pad_buffer_probe, NULL, NULL);

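// osd_sink_pad_buffer_probe is installed as the probe callback

// Everything above sets properties, links elements and registers handlers; it lays out the whole video processing flow before playback starts.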

/* Set the pipeline to "playing" state */

g_print ("Now playing: %s\n", argv[1]);

gst_element_set_state (pipeline, GST_STATE_PLAYING);// start playback

/* Iterate */

g_print ("Running...\n");

g_main_loop_run (loop);// blocks until bus_call quits the loop

/* Out of the main loop, clean up nicely */

g_print ("Returned, stopping playback\n");

gst_element_set_state (pipeline, GST_STATE_NULL);// release all resources allocated for the pipeline

g_print ("Deleting pipeline\n");

gst_object_unref (GST_OBJECT (pipeline));

g_source_remove (bus_watch_id);

g_main_loop_unref (loop);// destroy the loop object

return 0;

}
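One detail in the listing is worth checking by hand. The comment above `MUXER_BATCH_TIMEOUT_USEC` gives 40 milliseconds as an example and recommends deriving the timeout from the fastest source's frame rate, while the macro itself is set to 4000000, which in microseconds is 4 seconds. The arithmetic for a per-frame interval can be sketched as below; `frame_interval_usec` is a hypothetical helper, not part of the sample, and whether one frame interval is the right timeout for a given pipeline is a tuning decision this sketch does not settle.

```c
#include <stdio.h>

/* Hypothetical helper: duration of one frame in microseconds at the given
 * frame rate, using integer division. Returns 0 for a frame rate of 0. */
static unsigned int
frame_interval_usec (unsigned int fps)
{
  if (fps == 0)
    return 0;
  return 1000000u / fps;
}
```

At 25 frames per second this gives 40000 microseconds, which matches the 40 millisecond example in the comment.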

References

[1] https://docs.nvidia.com/metropolis/deepstream/plugin-manual/index.html

[2] https://docs.nvidia.com/metropolis/deepstream/dev-guide/DeepStream_Development_Guide/baggage/index.html
